Polymarket faced backlash after allowing bets on the timing of a rescue mission for a downed U.S. Air Force officer, leading the platform to remove these wagers amid congressional concern.

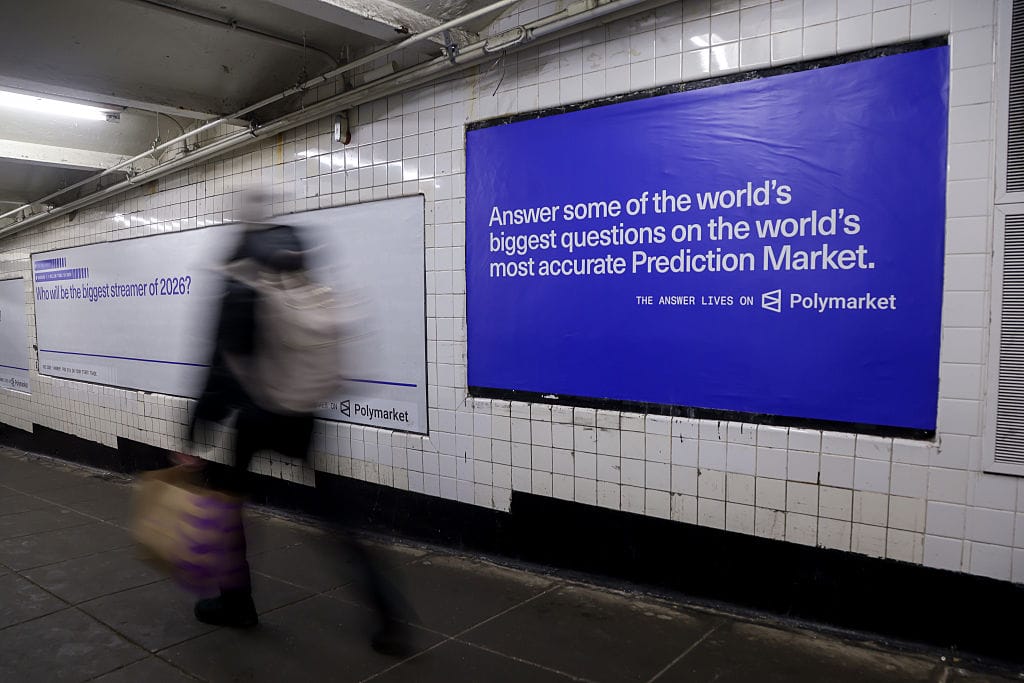

Polymarket, a decentralized prediction market platform, recently took down wagers connected to the rescue of Air Force service members who were shot down over Iran. The platform had permitted users to place bets on the date when the U.S. government would confirm the rescue, sparking immediate controversy. A Democratic congressman publicly criticized the site for enabling speculation on such a sensitive and ongoing military operation.

The incident raises difficult questions about the boundaries of prediction markets, especially when real-world events involve human lives and national security. While platforms like Polymarket have gained traction for forecasting political outcomes and economic events, this latest episode illustrates the risks that come with automating wagers around highly sensitive geopolitical developments.

For executives and business leaders following the evolution of decentralized finance and automation, the situation underscores the need for careful governance and ethical frameworks. Polymarket’s decision to remove the bets after public scrutiny demonstrates a reactive approach to content moderation, which may not be sustainable as these platforms scale and diversify their offerings.

This development also highlights broader concerns about the role of technology in amplifying potentially harmful speculation. While automation and smart contract-based platforms like Polymarket and OpenClaw offer unprecedented efficiency and transparency, they also introduce challenges in balancing freedom of expression with responsibility.

Anthropic’s Claude, an AI model that is increasingly integrated into automation tools, reflects a parallel trend in business: leveraging advanced AI to improve decision-making and operational efficiency. However, as seen with Polymarket, the deployment of automation and AI-driven processes requires robust oversight, especially when outcomes affect public trust and sensitive matters.

Business operators and founders should watch this space closely, as regulatory scrutiny and public expectations evolve around the use of automation in markets tied to real-world events. The Polymarket case serves as a cautionary example of how innovation in prediction markets must be paired with ethical guidelines and responsive moderation policies.

Looking ahead, the intersection of platforms like Polymarket, AI tools like Claude, and automation frameworks such as OpenClaw will continue to shape how companies approach risk, compliance, and user engagement. Ensuring these technologies are deployed thoughtfully will be critical to maintaining credibility and avoiding reputational risks in an increasingly interconnected digital economy.

Polymarket’s recent removal of wagers related to the rescue of a downed Air Force officer underscores the complexities that prediction market platforms face when dealing with sensitive geopolitical events.

As decentralized platforms like Polymarket continue to expand their offerings, they inevitably encounter situations where the ethical and operational boundaries of automated betting become blurred. The controversy surrounding these specific wagers highlights how prediction markets can inadvertently intersect with real-world crises, raising questions about the adequacy of existing governance mechanisms. For business leaders observing the intersection of decentralized finance and automation, this incident serves as a reminder that rapid technological innovation must be accompanied by deliberate oversight to address reputational and regulatory risks.

Moreover, the challenges faced by Polymarket resonate with broader trends in automation and AI-powered decision-making, including developments related to platforms such as OpenClaw and AI systems like Anthropic’s Claude. While automation can enhance efficiency and transparency in market operations, it also demands rigorous ethical frameworks to mitigate unintended consequences. Founders and executives should consider how these evolving technologies might impact their industries, especially as regulatory scrutiny intensifies and public expectations for corporate responsibility grow. This episode illustrates the ongoing balancing act between innovation and accountability in emerging digital marketplaces.

Polymarket’s removal of certain wagers reflects broader market sensitivities around automation and ethical boundaries in emerging prediction platforms.

From a market perspective, Polymarket’s decision to take down bets linked to the rescue of the downed Air Force officer highlights the delicate balance decentralized platforms must maintain between innovation and regulation. As these platforms automate the trading of event outcomes, they increasingly encounter scenarios where market activity intersects with sensitive geopolitical and human rights issues. This incident signals to investors and operators that governance frameworks will be critical to managing reputational risk and legal scrutiny, especially when markets touch on national security or humanitarian crises.

Moreover, the episode may influence how emerging automation tools like OpenClaw are deployed in the prediction market sector. Platforms leveraging smart contracts and AI integrations, potentially powered by systems like Anthropic’s Claude, will need to incorporate real-time ethical checks and content moderation to avoid amplifying speculative bets that could provoke public backlash or regulatory intervention. For executives and founders in decentralized finance and AI-driven marketplaces, this event serves as a cautionary example of the challenges in scaling automated trading models while preserving trust and compliance in complex, real-world contexts.
