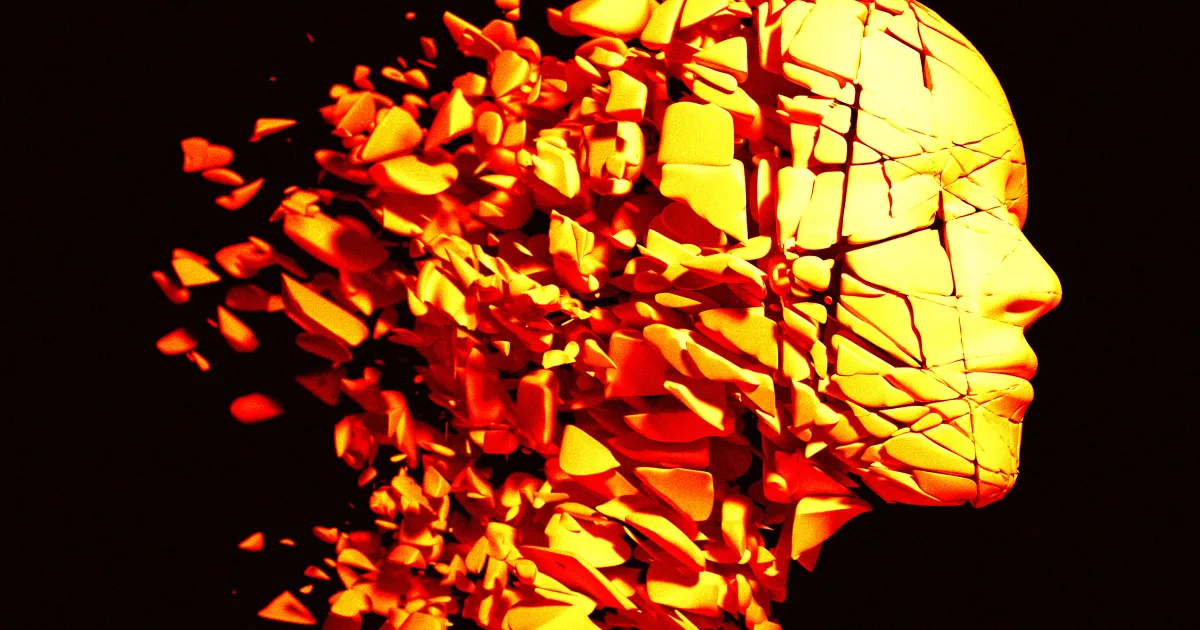

There is a story about AI that nobody tells at keynotes, because it is not the one that drives adoption. It shows up, instead, in research papers, in quiet admissions from heavy users, in the performance of juniors who have never worked without a chatbot, and in the slowly dawning suspicion among experienced professionals that they are not as sharp as they were two years ago.

Sustained, daily use of AI chatbots appears to be measurably degrading the cognitive skills those same chatbots were supposed to amplify. Synthesis. Argument. Recall. The ability to hold a problem in your head long enough to solve it without outside help.

Why this one is different from GPS

The standard pushback on any “technology is making us dumber” argument is that we’ve heard this before. Calculators didn’t destroy math. GPS didn’t destroy navigation. Google didn’t destroy memory. Humans are fine.

That is mostly right, and also missing the specific shape of what is happening now.

GPS eroded one narrow skill – remembering routes – that most people used intermittently. The cost was tolerable because navigation was a small, bounded chunk of cognition. When we lost some of it, the rest of thinking was unaffected.

Chatbots erode something different. They erode the general-purpose scaffolding of knowledge work: the motion of taking half-formed ideas, pressing against them, noticing where they break, and revising them. That scaffolding is not a single skill. It is the substrate for almost all white-collar work.

When GPS atrophies, you still think fine; you just don’t know where you are. When the scaffolding atrophies, you still know things; you just can’t reliably assemble them into a point of view without outside help.

What actually gets worse

The empirical pattern, emerging from the studies so far and matching what heavy users notice in themselves, looks like this:

Synthesis drops first. The capacity to take five messy inputs and compress them into one coherent theory is a practiced skill. Off-loading it to a model for six months feels like a productivity gain. Off-loading it for two years feels, when you try to do it unaided, like reaching for a muscle that’s gone soft.

Argument construction follows. Building a chain of reasoning – premise, step, step, conclusion – without a crutch requires holding several moves in working memory simultaneously. When that has been outsourced regularly, the holding becomes harder. You find yourself typing out thoughts to the model instead of completing them in your head, not because you can’t, but because the completed version now feels less obvious than it used to.

Error detection degrades. This one is the most dangerous. When you stop writing things yourself, you stop calibrating to how true things sound in your own voice. Then you stop noticing when an AI answer is confidently wrong. The failure mode is not that you are fooled by obvious lies; it is that you stop doing the silent check that used to catch the subtle ones.

Recall thins out. Not in the trivia sense. In the “I used to be able to hold this entire client’s context in my head during the meeting” sense.

The productivity-capability paradox

Here is the uncomfortable part. All four of those skills can decline while your productivity numbers go up, and they probably will.

A knowledge worker using AI heavily is measurably faster at tasks. More output per hour, more emails cleared, more briefs summarized, more code generated. On the scoreboard, they are winning.

What the scoreboard doesn’t capture: what happens when the tool is down, when the context is weird, when the problem is genuinely novel, or when the decision requires judgment that doesn’t reduce to a prompt. In those moments, the worker who practiced the hard skills beats the worker who outsourced them – consistently, visibly, and exactly when it counts.

Organizations are about to learn this lesson, slowly and expensively, as their most AI-reliant staff start to visibly under-perform on the hardest problems while still crushing the metrics dashboard.

What the actually-good AI users are starting to do

A quiet practice is forming among senior professionals who use AI heavily and don’t want to decay. None of it is glamorous.

Draft before you prompt. Write your own answer first – even badly, even in a bullet – and then ask the model. Compare. The delta is the learning. Skipping the draft is skipping the training rep.

Use AI to attack your thinking, not to replace it. “Here is my argument. Find the strongest counter” preserves your reasoning muscle. “Write an argument about X” does not.

Own the final edit. Never ship unreviewed AI text with your name on it. Not for legal reasons. For cognitive reasons. The review is where your judgment stays calibrated.

Protect one domain. Pick something you refuse to off-load – writing, math, analysis, one specific kind of decision – and keep doing it unaided. It is a gym membership for your brain.

Teach juniors the hard way first. This one is about the people who come after. Juniors who learn the skill before the shortcut are durable. Juniors who learn the shortcut first cannot recover the skill later – they have never had the thing the shortcut replaces.

The part institutions haven’t caught up to

Schools, universities and employers built their evaluation systems on the assumption that output reflects capability. That assumption is already broken. A student turning in a strong essay in 2026 may have excellent judgment, or may have excellent prompts. The output does not distinguish.

Expect, over the next two years, a return of something that looked like it was permanently retired: unaided, observed work. Timed essays. In-room coding. Oral exams. Whiteboard synthesis. Not as hazing. As the only remaining way to see what a person can actually do.

Employers will do the same thing, and they will feel guilty about it, and they will do it anyway. “Show me how you think about this without a model” is going to be a common interview prompt by 2027.

The real case for being careful

None of this is an argument against AI. The productivity gains are real and they are not going away. The case is narrower and more honest: the cost is real too, it is cognitive, it is cumulative, and it doesn’t show up on a quarterly dashboard.

People who use AI every day and stay sharp will do so on purpose. The rest will slide, slowly, into a version of themselves that is faster at the easy work and markedly worse at the hard work – and will not notice the trade-off until the hard work is all that’s left.

Related reading

Source: Futurism – Concern Grows That AI Is Damaging Our Ability to Think
