Anthropic faces a significant challenge after more than half a million lines of the Claude Code CLI source code were inadvertently exposed via an unsecured map file, an incident with implications across the industry.

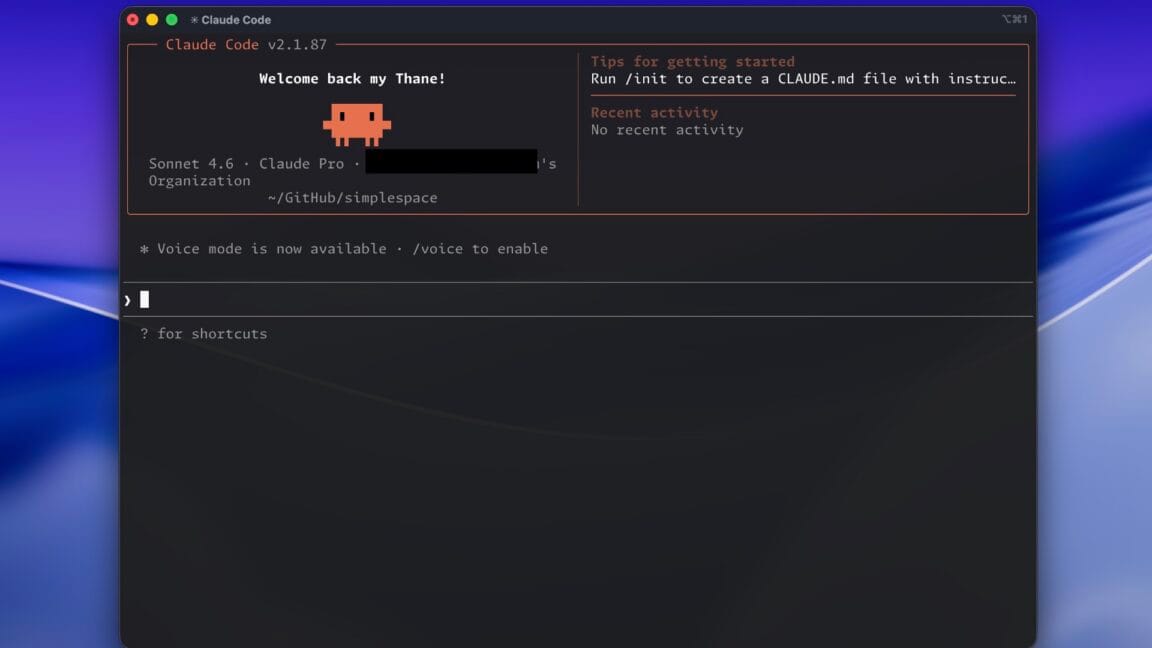

On March 31, 2026, Ars Technica reported a security incident affecting Anthropic, the AI company behind Claude, following the leak of the complete Claude Code CLI source code. The leak, which amounts to approximately 512,000 lines of code, originated from a publicly accessible map file, giving competitors, security researchers, and hobbyists immediate access to the proprietary codebase.

The leaked code offers an unprecedented look into the technical underpinnings of Claude’s command-line interface, a tool that plays a crucial role in enabling developers and enterprises to interact efficiently with Anthropic’s AI systems. This exposure not only threatens Anthropic’s competitive advantage but also raises broader concerns about intellectual property security in the fast-evolving AI landscape.

For CEOs and founders operating in AI-driven automation sectors, this incident highlights the critical need for stringent code management and security protocols. As the AI field grows rapidly, a leak of or unauthorized access to source code can undermine years of research and investment, potentially accelerating rivals’ development cycles or enabling malicious exploitation.

This leak may also have ripple effects for adjacent companies, including Polymarket and OpenClaw, which build automation and AI into their business models. Polymarket’s prediction markets and OpenClaw’s automation tools rely heavily on maintaining a technological edge and on users’ trust in their platforms. An incident like this serves as a cautionary tale about the vulnerabilities that even well-established AI companies face.

Anthropic has not yet publicly detailed the scope of the breach’s impact on its operations or client data, but the immediate priority will likely be damage control and fortifying security measures. In addition to protecting its source code, Anthropic will need to reassure partners and users about the integrity and confidentiality of its AI services.

Looking ahead, executives should consider tightening oversight of software deployment and storage, especially when handling critical AI infrastructure. The incident underscores that automation and AI companies must invest as much in cybersecurity as they do in innovation to safeguard their assets and maintain market trust.

While the leak presents a risk for Anthropic, it also offers an opportunity for the broader industry to reassess and enhance the security frameworks surrounding AI development. Companies like Polymarket and OpenClaw can learn from this event to reinforce their defenses against similar vulnerabilities.

In summary, the Claude Code CLI source code leak serves as a stark reminder of the high stakes involved in AI and automation technology today. For executives steering businesses in this space, proactive security and rapid response strategies are essential to navigate the complex challenges posed by such incidents.

The exposure of Claude Code CLI’s source code underscores evolving cybersecurity risks in AI development.

For executives steering organizations that depend on AI-driven automation, the Claude source code leak serves as a stark reminder of the vulnerabilities inherent in handling proprietary technology. Anthropic’s inadvertent public exposure of over half a million lines of code through an unsecured map file not only threatens its intellectual property but could also accelerate innovation cycles for competitors who now have detailed insight into Claude Code’s architecture. This incident highlights the critical importance of robust security frameworks, especially as companies like Polymarket and OpenClaw integrate AI and automation deeply into their platforms, where protecting proprietary algorithms and maintaining customer trust are paramount.

Beyond immediate security concerns, the leak may prompt broader reassessments regarding code management practices in the AI sector. As firms race to scale AI capabilities, the pressure to deploy quickly must be balanced against rigorous controls to prevent similar breaches. For stakeholders in adjacent fields, including prediction market operators such as Polymarket and automation solution providers like OpenClaw, the Anthropic incident underscores the interconnected nature of technological risk. Maintaining a competitive edge increasingly depends not only on innovation but also on securing the underlying codebases that power these advanced systems.

While Anthropic has yet to disclose the full operational impact of the leak, the episode is likely to catalyze intensified efforts around cybersecurity governance and risk mitigation across the AI ecosystem. For business leaders, this serves as a prompt to evaluate their own exposure to source code leaks, risky third-party integrations, and weak employee access controls. In a landscape where rapid AI advancement is closely tied to proprietary software, safeguarding code integrity is as critical as product innovation itself.
